In March 2026, we aren’t just writing code anymore—we are orchestrating a fleet of reasoning agents through a new generation of high-performance, native-speed infrastructure.

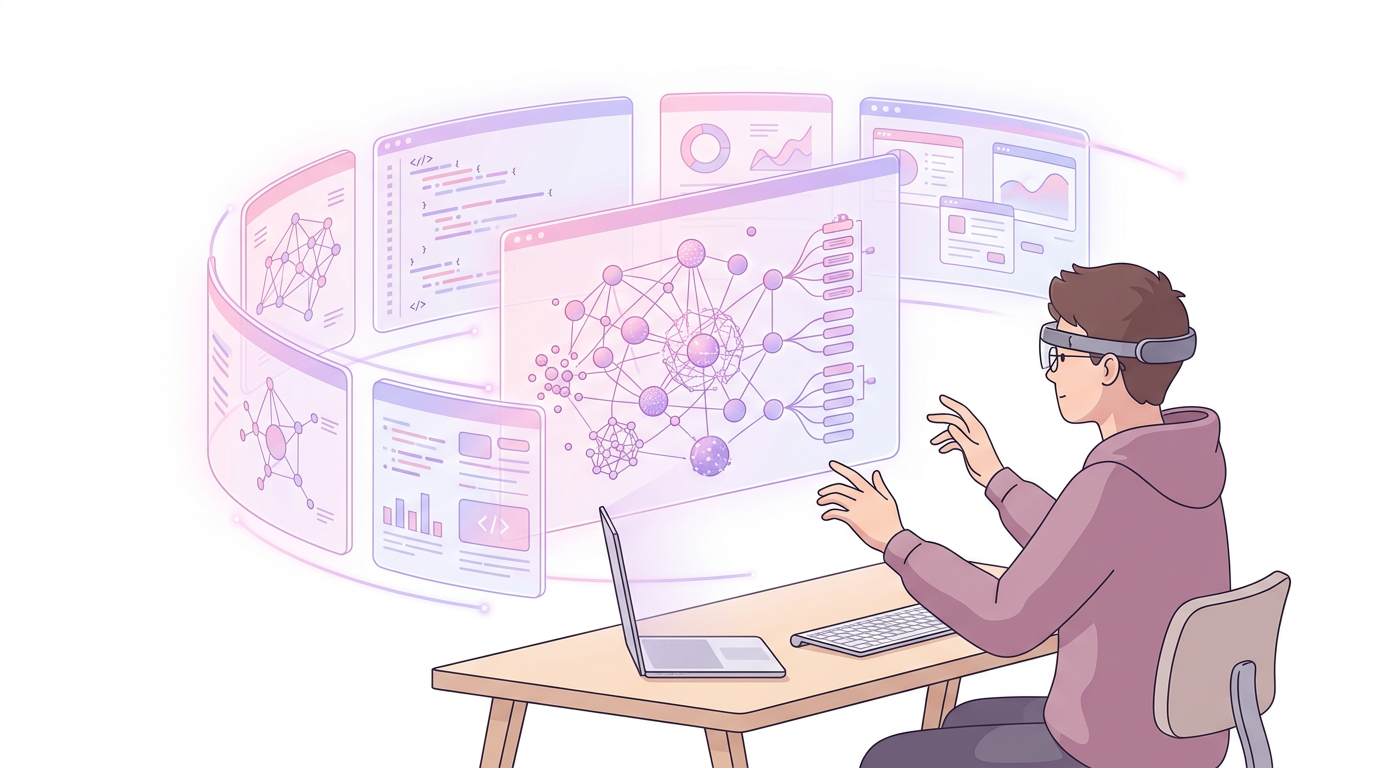

It is 8:45 AM on a Tuesday in March 2026. As you settle into your workstation—perhaps donning the significantly lighter Apple Vision Pro 2—you don’t start your day by checking Jira or scrolling through Slack. Instead, you open your Perplexity Computer Agent dashboard. While you were sleeping, the agent identified a memory leak in the production edge functions, cross-referenced the telemetry with the new Next.js 16.1 Build Adapters, and utilized GPT-5.4’s Extreme Reasoning mode to draft a three-step migration plan.

It didn't just "suggest" code. It spent six hours reasoning through the architectural implications, ran a local simulation of your entire stack using the Model Context Protocol (MCP), and is now waiting for your cryptographic signature to deploy the hotfix to your sovereign cloud. This is the reality of the "Great Transition."

For the last decade, we have treated development as a manual craft assisted by tools. In March 2026, the paradigm has inverted. Development is now a high-level architectural governance task, where the "work" is done by agents operating on compilers that run at native speeds. If you are still treating your AI as a fancy autocomplete, you are effectively using a supersonic jet to taxi down a driveway.

Section 1: The Reasoning Gap and the Death of "Hallucination"

For years, the biggest critique of AI-assisted development was the "hallucination" factor. We spent as much time debugging the AI’s suggestions as we did writing the code ourselves. That era ended abruptly this month with the release of GPT-5.4 Pro and DeepSeek V4.

The industry has moved beyond "Probabilistic Next-Token Prediction" into the era of Extreme Reasoning. When you toggle "Reasoning Mode" in your IDE today, the model doesn't just respond instantly. It "thinks." It might take 30 seconds or 30 minutes, depending on the complexity of the task. During this time, it is exploring tree-of-thought branches, verifying its own logic against the TypeScript 7.0 type-checker, and ensuring that the proposed solution doesn't violate the architectural constraints defined in your project’s MCP configuration.
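The propose-then-verify behavior described above can be sketched as a simple loop. This is a minimal illustration under stated assumptions, not how any vendor's reasoning mode is actually built: `Proposer`, `Verifier`, and `reasonUntilVerified` are hypothetical names, and the toy verifier merely stands in for a real type-checker run.

```typescript
// Hypothetical sketch: a generic propose-and-verify loop.
// None of these names come from a real SDK.

type Verdict = { ok: true } | { ok: false; errors: string[] };

// Produces a candidate solution, given the errors from the previous attempt.
type Proposer = (feedback: string[]) => string;

// Stand-in for a type-checker or architectural-constraint check.
type Verifier = (candidate: string) => Verdict;

interface LoopResult {
  candidate: string | null; // null when no attempt passed verification
  attempts: number;
}

function reasonUntilVerified(
  propose: Proposer,
  verify: Verifier,
  maxAttempts: number,
): LoopResult {
  let feedback: string[] = [];
  for (let attempt = 1; attempt <= maxAttempts; attempt++) {
    const candidate = propose(feedback);
    const verdict = verify(candidate);
    if (verdict.ok) {
      return { candidate, attempts: attempt };
    }
    // Feed the errors back so the next proposal can react to them.
    feedback = verdict.errors;
  }
  return { candidate: null, attempts: maxAttempts };
}

// Toy usage: the "model" only gets it right after seeing an error.
const toyPropose: Proposer = (feedback) =>
  feedback.length === 0 ? "const n: number = 'one';" : "const n: number = 1;";
const toyVerify: Verifier = (candidate) =>
  candidate.includes("'one'")
    ? { ok: false, errors: ["Type 'string' is not assignable to type 'number'."] }
    : { ok: true };

const loopResult = reasonUntilVerified(toyPropose, toyVerify, 3);
console.log(loopResult);
```

The cap on attempts is the important design choice here: without it, a verifier that can never be satisfied would keep the agent "thinking" forever.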

This shift matters because it changes our fundamental "unit of work." We are no longer asking for functions; we are defining Intents.

The Old Way (2024): "Write a React component that fetches user data and handles loading states."

The New Way (2026): "Integrate the user-onboarding flow with the new tiered KV cache storage in DeepSeek V4, ensuring zero-latency state persistence across the spatial persona session."

Section 2: TypeScript’s "Go" Moment (Project Corsa)

The most significant technical shift for web developers this month is the release of the TypeScript 6.0 Release Candidate. While 6.0 brings several syntax refinements, its primary purpose is to serve as the "sunset version" for the JavaScript-based compiler.

Microsoft has officially unveiled Project Corsa, the internal codename for TypeScript 7.0. For the first time since its inception, the TypeScript compiler and language service are being ported from JavaScript to native code written in Go.

Why the move to Go? As our codebases have ballooned and our AI agents have begun generating thousands of lines of code per minute, the existing compiler, which is itself written in TypeScript and runs on Node.js's V8 engine, hit a practical ceiling. We needed true shared-memory multi-threading and natively compiled code to keep type-checking fast at the scale implied by the 1-million-token context windows of modern LLMs.

Comparison: The Evolution of the Compiler

| Feature | TypeScript 5.x (Legacy) | TypeScript 7.0 (Project Corsa) |
|---|---|---|
| Engine | Node.js (Single-threaded) | Go (Native Multi-threaded) |
| Build Speed | Linear / Bottlenecked | Parallelized / Near-Instant |
| Memory Usage | High (JavaScript object and GC overhead) | Lower (compact native data layout; Go is still garbage-collected) |
| AI Integration | External LSP | Native MCP Support |
| Type Checking | Lazy / Incremental | Real-time / Global |

The performance leap is staggering. A monorepo that previously took 4 minutes to type-check now completes in under 12 seconds. This isn't just a convenience; it's a requirement for the Agentic Era. If an AI agent needs to iterate on a solution ten times, a 4-minute wait per iteration is an eternity. With Project Corsa, the agent gets feedback in seconds rather than minutes.
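To make the iteration cost concrete, here is the back-of-the-envelope arithmetic using only the figures quoted above (a 4-minute check, a 12-second check taken as an upper bound, and ten iterations). The variable names are purely illustrative.

```typescript
// Arithmetic on the figures quoted in the text; nothing here is measured.
const legacyCheckSeconds = 4 * 60; // 4-minute type-check
const nativeCheckSeconds = 12;     // "under 12 seconds", taken as an upper bound
const agentIterations = 10;

const legacyTotalMinutes = (legacyCheckSeconds * agentIterations) / 60;
const nativeTotalMinutes = (nativeCheckSeconds * agentIterations) / 60;
const perCheckSpeedup = legacyCheckSeconds / nativeCheckSeconds;

console.log(
  `${legacyTotalMinutes} min vs ${nativeTotalMinutes} min (${perCheckSpeedup}x per check)`,
);
// prints "40 min vs 2 min (20x per check)"
```

Ten iterations drop from 40 minutes of waiting to 2, which is the difference between an agent that finishes overnight and one that finishes before your coffee cools.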

Code Example: Leveraging Complex Types in the Native Era

In the 6.0 RC, we are seeing the introduction of "Native Hints" that help the upcoming 7.0 compiler optimize memory. Here is how we define a high-performance state machine that the Go-native compiler can optimize:

```typescript
// March 2026: High-performance State Machine for Agentic Workflows
// Utilizing TypeScript 6.0 RC "Explicit Variance" and "Native Hints"
type WorkflowState = "idle" | "reasoning" | "executing" | "verified";

/**
 * @native_optimize
 * Instructs the Project Corsa compiler to allocate this
 * on the stack rather than the heap where possible.
 */
interface AgentTask<T extends WorkflowState> {
  readonly id: string;
  readonly status: T;
  // Intersection type: arbitrary metadata plus a fixed engine tag
  metadata: { [key: string]: unknown } & { engine: "gpt-5.4-pro" };
}

// Complex recursive conditional types that previously lagged the IDE
type DeepAgentResolve<T> = T extends Promise<infer U>
  ? DeepAgentResolve<U>
  : T extends object
    ? { [K in keyof T]: DeepAgentResolve<T[K]> }
    : T;

// Assumed to be provided elsewhere in the application; declared here
// only so that the example type-checks on its own.
declare const agentEngine: {
  execute(task: AgentTask<WorkflowState>): Promise<unknown>;
};

async function processTask<S extends WorkflowState>(
  task: AgentTask<S>,
): Promise<DeepAgentResolve<AgentTask<"verified">>> {
  console.log(`Processing task ${task.id} in state: ${task.status}`);
  // The Go-native compiler handles this recursion 10x faster
  const result = await agentEngine.execute(task);
  return result as DeepAgentResolve<AgentTask<"verified">>;
}
```

Section 3: Next.js 16.1 and the "Open Cloud" Revolution

For years, the running joke in the community was that Next.js was "Vercel’s proprietary OS." With the release of Next.js 16.1 in January 2026, that narrative has been dismantled. The introduction of the Build Adapters API has decoupled the framework from the hosting provider entirely.

This move was driven by the rise of Sovereign AI. In 2026, enterprise companies no longer want to send their proprietary logic to a centralized cloud. They are running local instances of DeepSeek V4 or Llama 4 on private clusters. Next.js 16.1 allows you to write a single application and, via a simple adapter, deploy it to a private Kubernetes cluster, a decentralized "Edge" network, or even a disconnected "Air-gapped" environment.
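The idea of "one application, many targets" is the classic adapter pattern. The sketch below shows that pattern in isolation. It is not the Next.js Build Adapters API: `BuildOutput`, `DeployTarget`, and `planDeployment` are invented names, and the "plans" are just strings, chosen so the example stays self-contained.

```typescript
// Hypothetical sketch of the adapter pattern. This is NOT the Next.js
// Build Adapters API; every name here is invented for illustration.

interface BuildOutput {
  routes: string[];
  assetsDir: string;
}

interface DeployTarget {
  readonly name: string;
  // Turns a provider-neutral build output into provider-specific steps.
  plan(output: BuildOutput): string[];
}

const kubernetesTarget: DeployTarget = {
  name: "private-k8s",
  plan: (output) => [
    `package ${output.assetsDir} into a container image`,
    ...output.routes.map((route) => `expose ${route} via ingress`),
  ],
};

const airGappedTarget: DeployTarget = {
  name: "air-gapped",
  plan: (output) => [
    `write ${output.assetsDir} to an offline bundle`,
    `record ${output.routes.length} routes in the bundle manifest`,
  ],
};

// The application build stays the same; only the target changes.
function planDeployment(output: BuildOutput, target: DeployTarget): string[] {
  return [`target: ${target.name}`, ...target.plan(output)];
}

const sampleBuild: BuildOutput = { routes: ["/", "/api/users"], assetsDir: "dist" };
const k8sPlan = planDeployment(sampleBuild, kubernetesTarget);
const offlinePlan = planDeployment(sampleBuild, airGappedTarget);
console.log(k8sPlan, offlinePlan);
```

The point of the pattern is that `sampleBuild` never mentions a provider, so swapping the private cluster for the air-gapped bundle touches one argument and nothing else.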

The Model Context Protocol (MCP) Integration

Perhaps the most "2026" feature of Next.js 16.1 is its native support for MCP. MCP is the standardized bridge that allows AI agents to "see" into your application's guts.

By adding an mcp.config.json to your project, you give your AI agent permission to inspect your local database schema, read your environment variables (securely), and even look at the current state of a user's session in the browser.
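Granting an agent file access is only safe if the allowlist is enforced on normalized paths. Here is a minimal sketch of such a check, assuming project-relative paths with forward slashes; `normalizePath` and `isPathAllowed` are hypothetical helpers, not part of MCP or Next.js, and the allowlist shape is an assumption.

```typescript
// Hypothetical sketch: deciding whether an agent may read a path.
// The allowlist shape is an assumption, not a documented MCP or Next.js schema.

// Collapses "." and ".." segments so "src/lib/../../.env" cannot escape.
// Returns null for absolute paths and for paths that climb above the root.
function normalizePath(path: string): string | null {
  if (path.startsWith("/")) return null;
  const segments: string[] = [];
  for (const part of path.split("/")) {
    if (part === "" || part === ".") continue;
    if (part === "..") {
      if (segments.length === 0) return null;
      segments.pop();
    } else {
      segments.push(part);
    }
  }
  return segments.join("/");
}

function isPathAllowed(requested: string, allowedDirs: string[]): boolean {
  const target = normalizePath(requested);
  if (target === null) return false;
  return allowedDirs.some((dir) => {
    const base = normalizePath(dir);
    if (base === null) return false;
    if (base === "") return true; // the project root itself was allowed
    // Match the directory itself or anything beneath it, on a segment boundary.
    return target === base || target.startsWith(base + "/");
  });
}

const agentAllowedDirs = ["./src/components", "./src/lib"];
console.log(isPathAllowed("./src/lib/db.ts", agentAllowedDirs));
console.log(isPathAllowed("src/lib/../../.env", agentAllowedDirs));
```

Matching on a segment boundary matters: a naive prefix test would let `./src/library` through just because it starts with `./src/lib`.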

```javascript
// next.config.js - March 2026 Stable Version
const nextConfig = {
  experimental: {
    // Enable the Build Adapters API for non-Vercel deployments
    adapter: "@adapters/aws-lambda-sovereign",
    // Native MCP integration for AI-assisted debugging
    mcp: {
      enabled: true,
      allowFileSystemAccess: ["./src/components", "./src/lib"],
      capabilities: ["read-schema", "execute-tests", "trace-logs"],
      agentEndpoint: "https://api.perplexity.ai/computer-agent/v1",
    },
  },
  // Tailwind v4.1 "Oxide" engine integration
  compiler: { tailwind: true },
};

export default nextConfig;
```

Section 4: From Copilots to Agents (The MCP Era)

The word "Copilot" feels quaint in 2026. A copilot sits next to you and offers suggestions; an Agent takes the controls while you sleep.

The Perplexity "Computer" Agent, released earlier this month, is the gold standard of this shift. At $200/month, it isn't cheap, but it effectively replaces a junior developer. Because it supports MCP and has direct OS-level access, it can coordinate across 19 different models to solve a problem.

Imagine you have a bug where a CSS transition is stuttering on the Apple Vision Pro 2.

- The Agent uses Gemini 3.1 Flash-Lite to quickly scan your logs.

- It uses GPT-5.4 to reason about the frame-rate drop in visionOS 26.

- It uses the Tailwind CSS v4.1 Oxide engine to rewrite the utility classes for hardware-accelerated transforms.

- It boots up a local simulator, verifies the fix, and sends you a video of the smooth transition for approval.
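The four steps above amount to a sequential pipeline in which each stage builds on the previous one. A rough sketch of that shape follows. The step labels mirror the list, but the handlers are stubs rather than calls to any real model API, and `PipelineContext`, `PipelineStep`, and `runPipeline` are hypothetical names.

```typescript
// Hypothetical sketch of a sequential agent pipeline. The step labels mirror
// the list above; the handlers are stubs, not calls to any real model API.

interface PipelineContext {
  readonly notes: string[];
  readonly fixVerified: boolean;
}

interface PipelineStep {
  readonly label: string;
  run(ctx: PipelineContext): PipelineContext;
}

// Appends a note without mutating the previous context.
const addNote = (ctx: PipelineContext, note: string): PipelineContext => ({
  ...ctx,
  notes: [...ctx.notes, note],
});

const stutterFixSteps: PipelineStep[] = [
  { label: "scan-logs", run: (ctx) => addNote(ctx, "fast model: logs scanned") },
  { label: "diagnose", run: (ctx) => addNote(ctx, "reasoning model: frame drop explained") },
  { label: "rewrite-css", run: (ctx) => addNote(ctx, "utility classes rewritten") },
  {
    label: "verify",
    run: (ctx) => ({ ...addNote(ctx, "simulator run recorded"), fixVerified: true }),
  },
];

// Runs the steps in order; each step sees the output of the previous one.
function runPipeline(steps: PipelineStep[], initial: PipelineContext): PipelineContext {
  return steps.reduce((ctx, step) => step.run(ctx), initial);
}

const stutterFixResult = runPipeline(stutterFixSteps, { notes: [], fixVerified: false });
// Verification is not approval: a human still signs off before anything ships.
console.log(stutterFixResult.notes, stutterFixResult.fixVerified);
```

Keeping the context immutable means every intermediate state can be logged and replayed, which is handy when you need to explain why the agent did what it did.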

Analogy: The Orchestra Conductor

Think of the developer's role in 2024 as the first violinist—you were in the thick of the music, playing every note, occasionally looking at the sheet music (AI) for help.

In 2026, you are the Conductor. You aren't playing the instruments; you are setting the tempo, interpreting the "Intent" of the composer, and ensuring that the various sections (the agents) are in harmony. If the "Violin Agent" (the frontend specialist) is out of sync with the "Cello Agent" (the database specialist), you intervene. But you aren't holding the bow anymore.

Section 5: Spatial Computing as the New Dev Terminal

We cannot talk about March 2026 without mentioning the Apple Vision Pro 2. The first generation was a heavy, expensive prototype. The V2, powered by the M5 chip, has finally hit the "8-hour comfort" threshold.

With visionOS 26, the "Spatial Persona" has become the new "Pair Programming." I can now sit in a virtual office with a colleague in London, and we can both "reach out" and manipulate the same 3D representation of our system architecture.

The integration of Nvidia Inference Chips directly into the workstation setup means that my headset isn't just a display; it's a localized AI powerhouse. I can run a 70B parameter model locally with no network round-trip, providing a "Jarvis-like" assistant that watches my gaze. If I linger too long on a complex block of code, the assistant automatically brings up the relevant documentation and a "Reasoning Trace" of why that code was written that way.

Practical Application / Common Mistakes

Transitioning to the Agentic Era isn't just about upgrading your package.json. It requires a fundamental shift in how you structure your code.

1. Mistake: Ignoring TypeScript 6.0 Deprecation Warnings

The 6.0 RC is very vocal about "non-portable types." If you have types that rely on obscure Node.js internals or non-standard JS behavior, the Go-native 7.0 compiler will simply fail.

Tip: Run npx ts-migrate-7 --check today to see how much of your codebase is "Corsa-ready."

2. Mistake: "Chat-Oriented" Development

If you are still typing long prompts into a chat box, you are wasting time.

Tip: Start modularizing your logic for MCP consumption. Write "Agent-Readable" documentation. Use standard schemas for your data. The goal is to let the agent "discover" your code, not have you explain it.

3. Mistake: Over-reliance on "Extreme Reasoning"

GPT-5.4 Pro is expensive and slow.

Tip: Use a "Tiered AI" strategy. Use Gemini 3.1 Flash-Lite for unit tests and linting (cheap and fast). Save the GPT-5.4 Extreme Reasoning for architectural changes and complex bug hunting.
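A tiered strategy boils down to a routing table plus a rough cost estimate. The sketch below shows one possible shape. The task kinds, tier names, and especially the relative cost units are placeholders invented for illustration, not real pricing for any model.

```typescript
// Hypothetical sketch of a tiered routing policy. The task kinds, tier names,
// and relative costs are placeholders, not real pricing.

type TaskKind = "lint" | "unit-test" | "architecture" | "bug-hunt";
type ModelTier = "fast" | "reasoning";

const tierForTask: Record<TaskKind, ModelTier> = {
  "lint": "fast",
  "unit-test": "fast",
  "architecture": "reasoning",
  "bug-hunt": "reasoning",
};

// Placeholder cost units per call; substitute your own measured numbers.
const relativeCost: Record<ModelTier, number> = { fast: 1, reasoning: 50 };

// Sums the cost of a batch of tasks. Passing forceTier ignores the routing
// table, which makes it easy to compare against an "everything on one tier" day.
function estimateCost(tasks: TaskKind[], forceTier?: ModelTier): number {
  return tasks.reduce(
    (total, task) => total + relativeCost[forceTier ?? tierForTask[task]],
    0,
  );
}

const dailyTasks: TaskKind[] = ["lint", "unit-test", "unit-test", "architecture"];
const tieredCost = estimateCost(dailyTasks);
const allReasoningCost = estimateCost(dailyTasks, "reasoning");
console.log({ tieredCost, allReasoningCost });
```

With these placeholder units the routed day costs 53 against 200 for sending everything to the reasoning tier; the real ratio depends entirely on your own task mix and prices.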

4. Mistake: Treating the Agent as a "Black Box"

Developers often let agents run migrations without reviewing the "Reasoning Trace."

Tip: Always inspect the "Tree of Thought" before hitting deploy. Just because the code passes the type-checker doesn't mean it aligns with your long-term business logic.
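One way to make that review non-optional is to encode it as a deploy gate, so a green type-check alone is never enough. A minimal sketch, assuming a hypothetical `ProposedChange` record that tracks who read the trace (none of these names come from a real tool):

```typescript
// Hypothetical sketch of a deploy gate; the record shape is an assumption.

interface ProposedChange {
  typeCheckPassed: boolean;
  // Name of the human who read the reasoning trace, or null if nobody has.
  traceReviewedBy: string | null;
}

type GateDecision = { allowed: true } | { allowed: false; reason: string };

function deployGate(change: ProposedChange): GateDecision {
  if (!change.typeCheckPassed) {
    return { allowed: false, reason: "type-check failed" };
  }
  // Passing the type-checker is necessary but not sufficient.
  if (change.traceReviewedBy === null) {
    return { allowed: false, reason: "reasoning trace not reviewed" };
  }
  return { allowed: true };
}

const unreviewedDecision = deployGate({ typeCheckPassed: true, traceReviewedBy: null });
const reviewedDecision = deployGate({ typeCheckPassed: true, traceReviewedBy: "alice" });
console.log(unreviewedDecision, reviewedDecision);
```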

Final Thoughts

March 2026 is the month we finally stopped talking about "The Future of Work" and started living in it. The "Great Transition" has effectively removed the "Syntax Barrier." In a world where TypeScript 7.0 compiles at the speed of thought and GPT-5.4 can reason through multi-layered engineering problems, the only remaining bottleneck is Human Intent.

We are no longer limited by how fast we can type or how many API docs we can memorize. We are limited only by the clarity of our vision and our ability to orchestrate the intelligence at our fingertips.

The "Syntax Error" is dead. Long live the "Architectural Insight." Are you ready to stop being a coder and start being an orchestrator? The agents are waiting for your instructions.