Python in the Age of Agents: Why the World’s Most Popular Language is Just Getting Started

For the last decade, a recurring prophecy has circulated in the halls of computer science: "Python is too slow for the future." Critics pointed to its Global Interpreter Lock (GIL), its humble execution speeds, and its dynamic nature as evidence that a more "serious" language—like Rust, Go, or even the newcomer Mojo—would eventually seize the crown.

Yet, as we move through 2025 and look toward 2026, the opposite has happened. Python isn’t just surviving; it is undergoing a radical metamorphosis. It has evolved from a "beginner’s scripting language" into the high-performance orchestration layer of the Agentic AI era.

While JavaScript and TypeScript are winning the battle for the "Agentic Frontend" (powering the interfaces and WebMCP connections we use to interact with AI), Python remains the undisputed king of the Agentic Brain.

1. The Performance Renaissance: Python 3.13+ and the Death of the GIL

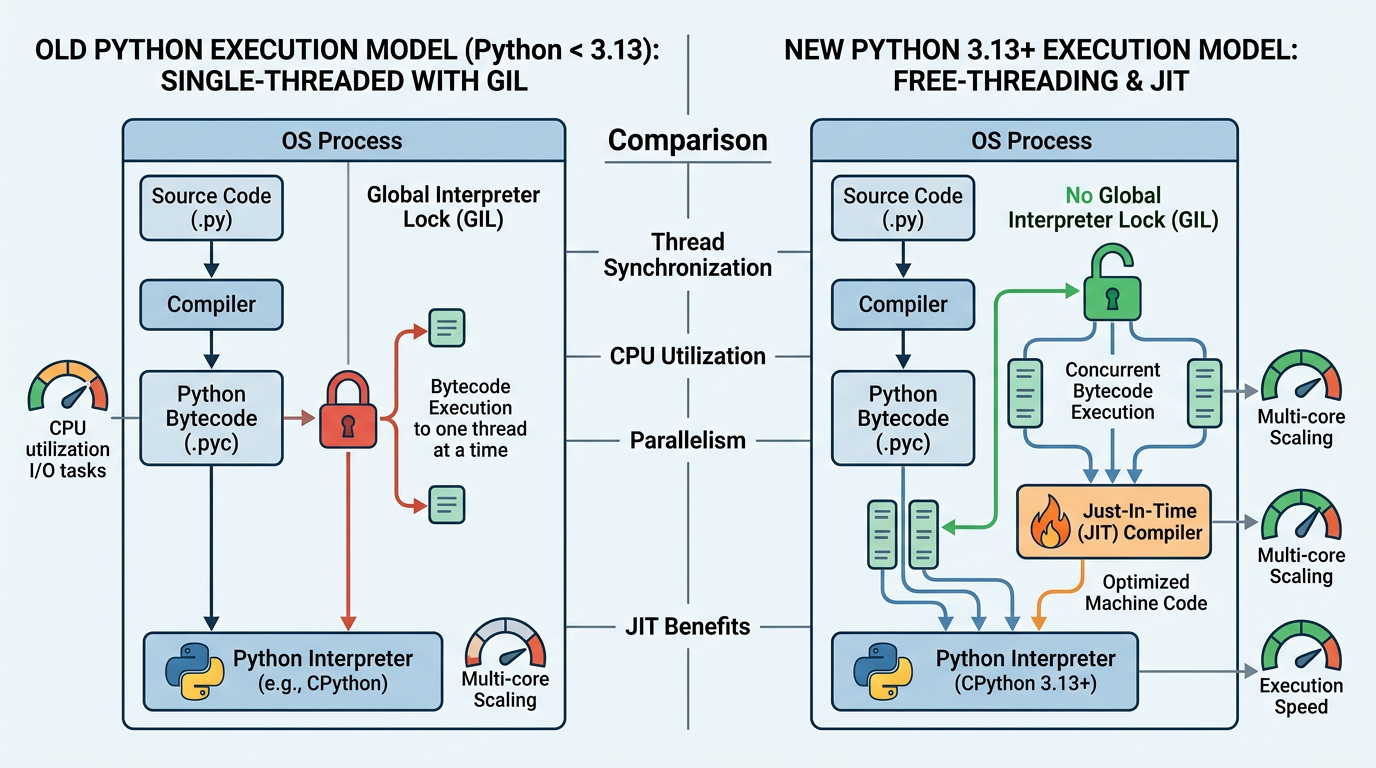

The most significant barrier to Python programming at scale has historically been the Global Interpreter Lock (GIL). The GIL prevented Python from taking full advantage of multi-core processors, forcing developers to use complex multiprocessing workarounds for parallel tasks.

With the release of Python 3.13 and the roadmap for 3.14, that barrier is finally crumbling.

Breaking the Lock: Free-threaded Python

Python 3.13 introduced an experimental free-threaded build (PEP 703). It is a separate build of the interpreter rather than the default, and it runs without the GIL so that threads can execute in parallel across multiple CPU cores. For AI agents, this matters most when the work is CPU-bound. An agentic system often needs to run several "thought loops" at once: querying a database, searching the web, and processing an LLM response. The I/O-bound parts of that workload already overlap well under threads or asyncio; free-threading extends the benefit to the CPU-heavy parts, letting agents scale vertically on a single machine.
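As a rough illustration of the idea above, the sketch below runs several CPU-bound tasks on threads and reports whether the GIL is active. The workload (`count_primes`) and the helper names are hypothetical stand-ins, not part of any agent framework. `sys._is_gil_enabled()` is available on Python 3.13 and later, so the code falls back to assuming a GIL on older interpreters. The results are identical on any build; only the wall-clock time should differ on a free-threaded interpreter.

```python
import sys
from concurrent.futures import ThreadPoolExecutor


def gil_enabled() -> bool:
    """Report whether the GIL is active; assume it is on older interpreters."""
    # sys._is_gil_enabled() exists on Python 3.13+ only.
    check = getattr(sys, "_is_gil_enabled", None)
    return check() if check is not None else True


def count_primes(limit: int) -> int:
    """CPU-bound stand-in for one agent sub-task (hypothetical workload)."""
    count = 0
    for n in range(2, limit):
        # n is prime if no d in [2, sqrt(n)] divides it.
        if all(n % d for d in range(2, int(n ** 0.5) + 1)):
            count += 1
    return count


def run_parallel(limits: list[int]) -> list[int]:
    """Run each workload on its own thread; results keep the input order."""
    with ThreadPoolExecutor(max_workers=len(limits)) as pool:
        return list(pool.map(count_primes, limits))


if __name__ == "__main__":
    print("GIL enabled:", gil_enabled())
    print(run_parallel([20_000, 20_000, 20_000]))
```

On a standard build the three tasks take turns holding the GIL; on a free-threaded build they can occupy three cores at once.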

The JIT Revolution

Alongside the free-threaded build, Python 3.13 added an experimental Just-In-Time (JIT) compiler. It is disabled by default and has to be enabled when CPython is built. The JIT translates hot parts of the Python bytecode into machine code on the fly. Early gains are modest, but it lays the groundwork for narrowing the performance gap between Python and languages like Java, especially in tight loops and heavy computational logic.

Why this matters for Developers: As these features mature, fewer performance-critical pieces of AI logic will need to be rewritten in C++ or Rust. You can keep more of your "Agentic Brain" in Python, maintaining readability while moving closer to the speed required for real-time agentic reasoning.

2. From Scripting to Engineering: The Pydantic Revolution

In the early days of Python, the language's "loose" nature was its biggest draw. But for building reliable AI agents, "loose" is dangerous. An agent that outputs a malformed JSON string can break an entire production pipeline.

The industry has responded by moving away from "string-heavy" prompts toward structured data engineering. This shift is powered by Pydantic v2.

Type Safety in a Dynamic World

Python’s Type Hinting system has matured into a robust toolset. By using Pydantic—which is now powered by a blazing-fast Rust core—developers can enforce strict data schemas. When an AI agent generates an output, Pydantic validates it instantly. If the data doesn't match the schema, it's caught before it can cause downstream errors.
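A minimal sketch of that validation step, assuming Pydantic v2 is installed. The `ToolCall` schema, its fields, and the `parse_agent_output` helper are hypothetical names chosen for illustration; only `BaseModel`, `Field`, `ValidationError`, and `model_validate_json` come from Pydantic itself.

```python
from typing import Optional

from pydantic import BaseModel, Field, ValidationError


class ToolCall(BaseModel):
    """Hypothetical schema for an action an agent asks the backend to run."""

    tool: str
    query: str = Field(min_length=1)
    max_results: int = Field(default=5, ge=1, le=50)


def parse_agent_output(raw_json: str) -> Optional[ToolCall]:
    """Return a validated ToolCall, or None if the LLM output is malformed."""
    try:
        # Parses the JSON and validates it against the schema in one step.
        return ToolCall.model_validate_json(raw_json)
    except ValidationError as exc:
        # A real pipeline might log the errors or re-prompt the model here.
        print(f"rejected agent output: {exc.error_count()} error(s)")
        return None


if __name__ == "__main__":
    print(parse_agent_output('{"tool": "search", "query": "python 3.13"}'))
    print(parse_agent_output('{"tool": "search", "query": ""}'))
```

Invalid JSON, a missing field, and an out-of-range value all take the same rejection path, so downstream code only ever sees a well-formed `ToolCall`.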

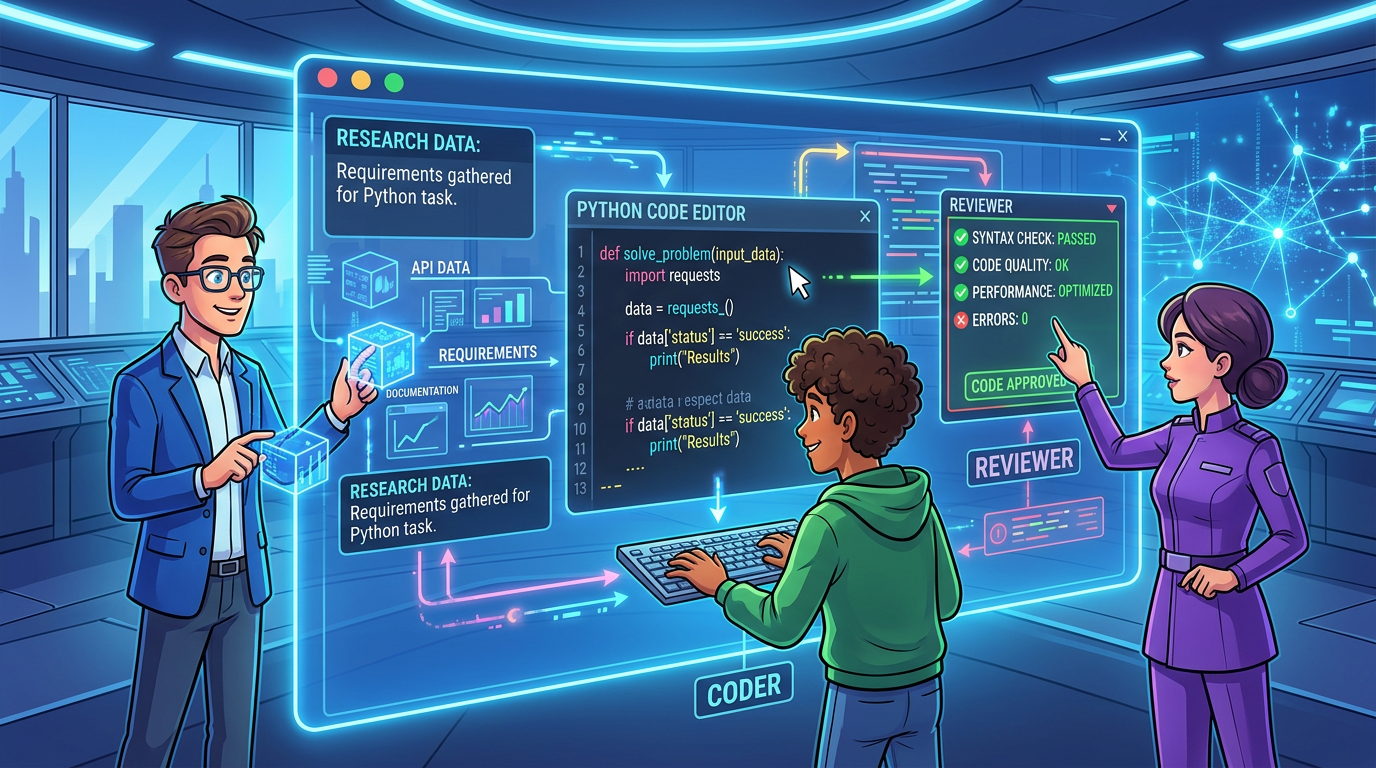

The Rise of PydanticAI

We are seeing a move away from legacy frameworks toward PydanticAI. While older frameworks often felt like "black boxes," PydanticAI treats LLM interactions as standard Python functions with typed inputs and outputs. This makes Python backends as robust as those written in Go or Java, which is critical when agents are tasked with handling financial transactions or sensitive user data.

3. The Agentic Ecosystem: Why Python is the "Brain" Language

If you look at the most successful Agentic AI frameworks today, they share a common DNA: they are built to leverage Python’s massive library "moat."

Framework Spotlight:

- LangGraph: Developed by the LangChain team, LangGraph is designed for complex, circular agent workflows. It treats agent interactions as a graph, allowing for the "loops" and "cycles" of reasoning that linear chains can't handle.

- CrewAI: This framework focuses on multi-agent orchestration. It allows you to define "crews" of agents (e.g., a Researcher, a Writer, and a Manager) that work together to solve complex tasks.

- PydanticAI: The new standard for type-safe LLM interactions. It is built for developers who want the reliability of a compiled language with the expressive power of Python.
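To make the "loops and cycles" idea concrete, here is a framework-free sketch of a cyclic workflow written with only the standard library. It is not LangGraph's API. The `State` fields, the `write` and `review` nodes, and the `run_graph` helper are all hypothetical, and the review step is a placeholder for an LLM critique.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List

END = "end"


@dataclass
class State:
    """Shared state passed between nodes (hypothetical fields)."""

    draft: str = ""
    revisions: int = 0
    log: List[str] = field(default_factory=list)


# Each node mutates the state and returns the name of the next node.
Node = Callable[[State], str]


def write(state: State) -> str:
    state.revisions += 1
    state.draft = f"draft v{state.revisions}"
    state.log.append("write")
    return "review"


def review(state: State) -> str:
    state.log.append("review")
    # Stand-in for an LLM critique: accept the third revision.
    return END if state.revisions >= 3 else "write"


def run_graph(nodes: Dict[str, Node], start: str, state: State,
              max_steps: int = 20) -> State:
    """Follow edges until END, with a step cap so a cycle cannot run forever."""
    current, steps = start, 0
    while current != END:
        if steps >= max_steps:
            raise RuntimeError("graph did not finish within max_steps")
        current = nodes[current](state)
        steps += 1
    return state


if __name__ == "__main__":
    final = run_graph({"write": write, "review": review}, "write", State())
    print(final.draft, final.log)
```

The edge from `review` back to `write` is what a linear chain cannot express. The step cap is a design choice to keep a misbehaving loop from running indefinitely.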

The Library Moat

The reason Python remains the "gravity well" for AI talent is simple: the ecosystem. Every major AI breakthrough—from PyTorch and TensorFlow to HuggingFace’s Transformers—is built in or for Python. When a new paper is published on arXiv, the reference implementation is almost always Python. This creates an insurmountable advantage; even if a faster language emerges, it won't have the decades of optimized mathematical and AI libraries that Python possesses.

4. Python vs. The Challengers: Mojo, Rust, and TypeScript

Is Python’s dominance under threat? Let’s look at the primary contenders.

- Mojo: Modular’s Mojo is marketed as a Python-compatible language with C-level speed. While Mojo is impressive, it is currently a niche tool. Python isn't being replaced by Mojo; rather, CPython is pursuing some of the same goals on its own, such as JIT compilation and better multi-core support.

- TypeScript: In our previous discussions on WebMCP, we noted that TypeScript is winning the "Agentic Frontend." TS is perfect for building the UI and handling the asynchronous nature of the web. However, for the "Agentic Backend"—where heavy data processing, tensor manipulation, and complex orchestration live—TypeScript lacks the deep AI library support that Python offers.

- Rust: Rust is increasingly the "engine" under Python’s hood. Tools like uv, Pydantic, and Polars are written in Rust but used via Python. This is the ultimate "win-win": Rust provides the safety and speed, while Python provides the developer-friendly interface.

The Verdict: Python’s "readability as a first-class citizen" makes it the best language for prompt-heavy codebases. In the Agentic Era, the code is the logic, and Python is the closest thing we have to "executable English."

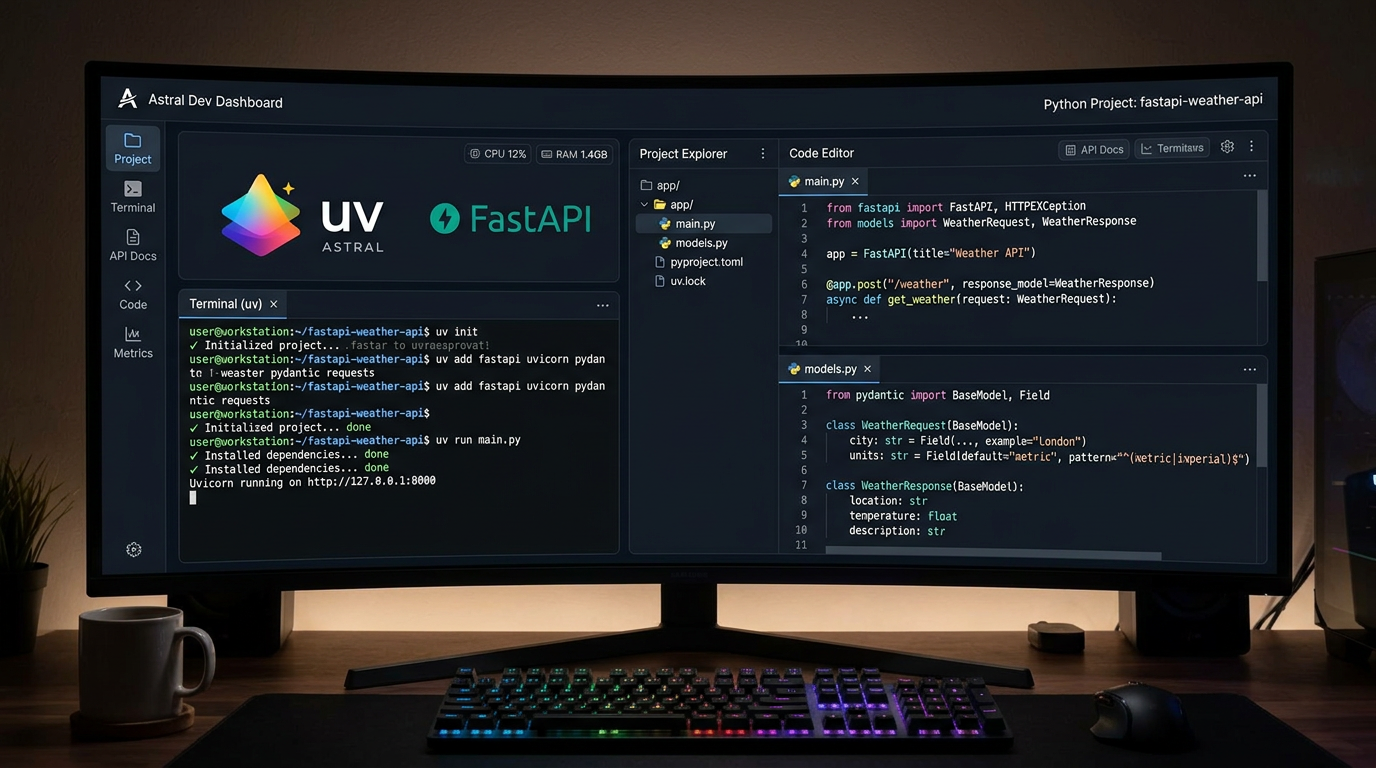

5. Getting Started with "Modern" Python (2026 Workflow)

If you are starting a project in 2025 or 2026, your Python programming workflow should look very different than it did three years ago.

The Modern Stack:

- Environment Management: Forget pip and conda. The new king is uv. Written in Rust, uv is an ultra-fast package manager that can install dependencies in milliseconds and manage Python versions effortlessly.

- The Core: Use Python 3.13+ to take advantage of the improved error messages and to experiment with the JIT.

- The API: FastAPI remains the gold standard for building the interfaces that agents use to communicate.

- The Logic: Use PydanticAI or LangGraph for your agent orchestration.

Best Practices for "Agent-Ready" Code:

- Embrace Async: AI agents spend a lot of time waiting for LLM responses. Writing asynchronous code (async/await) is no longer optional; it is a requirement.

- Strict Typing: Use mypy or pyright to enforce type safety. This ensures your agents are predictable.

- Modular Prompts: Don't hardcode prompts. Treat them as data structures that can be versioned and validated.
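The practices above can be sketched together using only the standard library. The example assumes a hypothetical `fake_llm` coroutine in place of a real provider call, and a hypothetical `PromptTemplate` dataclass as one possible way to treat a prompt as versioned data. Neither name comes from a specific framework.

```python
import asyncio
from dataclasses import dataclass


@dataclass(frozen=True)
class PromptTemplate:
    """A prompt treated as versioned data instead of an inline string."""

    name: str
    version: str
    template: str

    def render(self, **values: str) -> str:
        # str.format raises KeyError if a placeholder value is missing.
        return self.template.format(**values)


SUMMARIZE = PromptTemplate(
    name="summarize",
    version="1.0.0",
    template="Summarize the following for {audience}: {text}",
)


async def fake_llm(prompt: str, delay: float = 0.05) -> str:
    """Hypothetical stand-in for a network call to an LLM provider."""
    await asyncio.sleep(delay)
    return f"[response to {len(prompt)} chars]"


async def summarize_all(texts: list[str], audience: str) -> list[str]:
    """Issue all requests concurrently; gather preserves the input order."""
    prompts = [SUMMARIZE.render(audience=audience, text=t) for t in texts]
    return await asyncio.gather(*(fake_llm(p) for p in prompts))


if __name__ == "__main__":
    print(asyncio.run(summarize_all(["first doc", "second doc"], "engineers")))
```

Because the calls are awaited together, total latency is close to that of the slowest single call rather than the sum of all of them. The type hints throughout are what mypy or pyright would check.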

Conclusion: The Future is Pythonic

Python isn't staying relevant by accident; it is evolving to meet the specific demands of the Agentic Era. By making the GIL optional, introducing JIT compilation, and embracing Rust-powered tooling, Python is answering the critics who claimed it couldn't scale.

If you are building the "Self-Healing Frontends" or the "Autonomous Research Agents" of tomorrow, Python is your foundation. It is the language that connects the raw power of the LLM to the structured world of software engineering.

The "Agentic Brain" is here, and it speaks Python.
