How Python 3.14.3 and a Rust-powered ecosystem transformed the world’s most popular language from a "slow" scripting tool into a high-performance AI orchestrator.

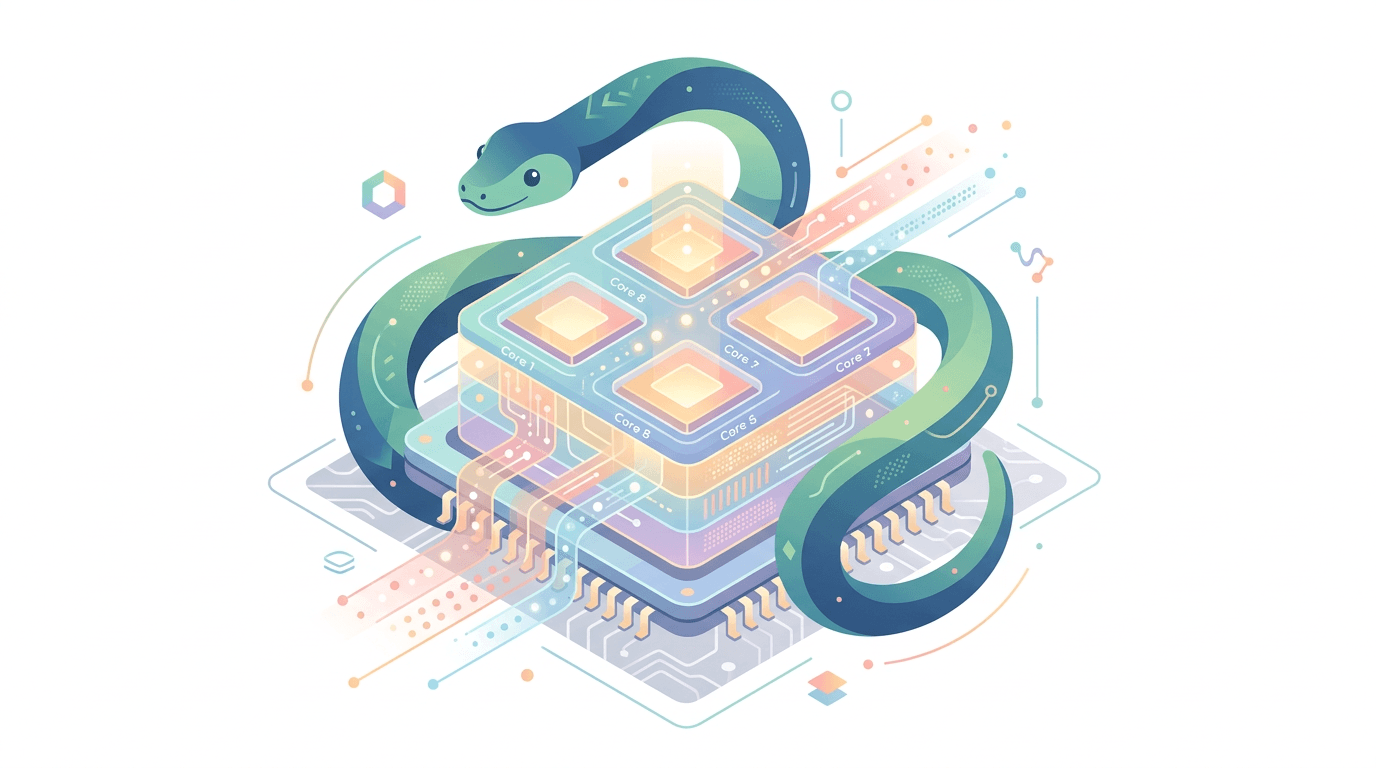

Imagine it’s Tuesday morning in March 2026. You don’t start your day by wrestling with dependency conflicts or writing hundreds of lines of boilerplate CRUD operations. Instead, you open your IDE and describe a complex, multi-agent data pipeline to your AI orchestrator in natural language. This is "Vibe Coding," a term that went viral in 2025 to describe the shift from syntax-heavy development to intent-based engineering. Within seconds, your AI tooling produces a thread-safe, high-performance solution that runs on Python 3.14.3 and uses all sixteen cores of your workstation.

For over two decades, the "Python is slow" and "Python has a Global Interpreter Lock (GIL)" arguments were the standard critiques from systems engineers. But as of this month, those arguments haven’t just aged poorly—they’ve been rendered obsolete. We are currently living through what the community calls the "Python Renaissance." This isn't just a minor update; it is a fundamental transformation of the language’s internal architecture and its surrounding ecosystem.

In this post, we’ll dive deep into the state of Python as of March 2026, exploring the "No-GIL" revolution, the complete "Rust-ification" of our tooling, and how the rise of Agentic AI has cemented Python as the indispensable language of the next decade.

Section 1: The Context — Why Python Matters More Than Ever in 2026

In early 2025, the Python community faced a critical crossroads. There was a vocal movement to switch to Calendar Versioning (CalVer) via PEP 2026, which would have renamed this year's releases to things like "Python 3.26." However, the Python Steering Council (the body that decides on PEPs, not the Python Software Foundation) ultimately rejected the proposal to maintain stability and avoid breaking the millions of scripts that rely on standard version-parsing logic.

This decision signaled a broader theme for 2026: Internal Revolution, External Stability. While the version number remains in the 3.x sequence, the engine under the hood has been entirely rebuilt. Python has moved away from being a language you use "despite" its performance issues to being the language you use "because" of its orchestration capabilities.

The primary driver for this shift is the explosion of Agentic AI. We have moved past simple chatbots and Retrieval-Augmented Generation (RAG). Today, we build "Agents"—autonomous Python scripts that can browse the web, execute code, and collaborate with other agents to solve open-ended problems. Because Python is the "native tongue" of Large Language Models (LLMs), it has become the glue holding the modern AI stack together.

Section 2: Breaking the Chains — The No-GIL Revolution

The biggest headline of 2026 is undoubtedly the free-threaded build becoming officially supported in Python 3.14 (PEP 779). For the first time, developers can opt out of the Global Interpreter Lock (GIL), the infamous mechanism that prevented Python from executing bytecode in multiple threads simultaneously, without relying on an experimental build.

The 3.1x Speedup Milestone

In our current version, Python 3.14.3, the free-threaded build (compiled with the `--disable-gil` configure option and typically installed as `python3.14t`) has reached a level of maturity that is seeing widespread adoption in scientific computing and heavy data processing. Benchmarks for CPU-bound tasks, such as complex mathematical simulations or image processing, are showing a 3.1x speedup on 4-core systems compared to GIL-bound Python 3.13 builds.

To visualize this, imagine the GIL as a "one-way bridge" where only one car can cross at a time, regardless of how many lanes are available. The No-GIL effort has effectively upgraded that bridge into a multi-lane highway.

The "Single-Threaded Tax"

However, this performance doesn't come for free. One of the most discussed topics in the March 2026 developer forums is the "Single-Threaded Tax." Because the free-threaded build requires more complex reference counting (to ensure memory safety across multiple threads), single-threaded code actually runs slower on it: roughly 5% to 10% slower in 3.14, depending on platform and compiler, down from around 40% in 3.13.

This is why, for the majority of web developers building standard APIs or CRUD apps, the GIL remains enabled by default. The choice now lies with the developer: do you need massive parallel throughput, or do you need the fastest possible single-thread execution?
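Before making that choice, it helps to know which kind of interpreter you are actually running. The following is a minimal sketch, not an official recipe: `describe_gil` is a name made up for this post, while `sysconfig.get_config_var("Py_GIL_DISABLED")` and `sys._is_gil_enabled()` are the interpreter's own hooks (the latter only exists on Python 3.13 and later, so the code falls back gracefully).

```python
import sys
import sysconfig


def describe_gil() -> dict:
    """Report whether this interpreter is a free-threaded build and whether the GIL is on."""
    # Py_GIL_DISABLED is 1 on free-threaded builds; it is 0 or None otherwise.
    free_threaded_build = bool(sysconfig.get_config_var("Py_GIL_DISABLED"))

    # sys._is_gil_enabled() is available on Python 3.13+.
    # Older interpreters always run with the GIL, so default to True.
    check = getattr(sys, "_is_gil_enabled", None)
    gil_enabled = bool(check()) if check is not None else True

    return {"free_threaded_build": free_threaded_build, "gil_enabled": gil_enabled}


if __name__ == "__main__":
    print(describe_gil())
```

Note that a free-threaded build can still end up with the GIL switched back on at runtime, for example when an extension module that has not declared free-threading support is imported, which is why the sketch reports both values separately.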

Code Example: Parallel Execution in 3.14.3

Here is how we leverage the new multi-core capabilities in a CPU-bound scenario using the concurrent.futures module, assuming you are running the free-threaded build:

```python
import time
from concurrent.futures import ThreadPoolExecutor


def heavy_computation(n):
    """A CPU-intensive task that benefits from No-GIL."""
    result = 0
    for i in range(n):
        result += (i ** 2) % 1234567
    return result


def run_parallel_test():
    # On a free-threaded (No-GIL) build of Python 3.14.3, these threads
    # can run on separate CPU cores simultaneously.
    numbers = [10_000_000] * 8
    start_time = time.perf_counter()
    with ThreadPoolExecutor(max_workers=8) as executor:
        results = list(executor.map(heavy_computation, numbers))
    end_time = time.perf_counter()
    print(f"Computed {len(results)} tasks in {end_time - start_time:.4f} seconds")


if __name__ == "__main__":
    # Run this with a free-threaded interpreter (for example python3.14t,
    # which is built with --disable-gil) to see true parallel execution.
    run_parallel_test()
```
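A single timing tells you little on its own, so it can be worth measuring the same workload serially and with threads on your own machine. Here is a rough sketch under simple assumptions: `cpu_task` and `measure_speedup` are illustrative names, the workload is deliberately small, and the ratio you see will depend entirely on your interpreter build and hardware.

```python
import time
from concurrent.futures import ThreadPoolExecutor


def cpu_task(n: int) -> int:
    """Small CPU-bound loop used as the unit of work."""
    total = 0
    for i in range(n):
        total += (i * i) % 1234567
    return total


def measure_speedup(n: int = 200_000, tasks: int = 4):
    """Return (serial_seconds, threaded_seconds, speedup) for the same workload."""
    inputs = [n] * tasks

    # Baseline: run every task one after another on the calling thread.
    start = time.perf_counter()
    serial_results = [cpu_task(x) for x in inputs]
    serial_seconds = time.perf_counter() - start

    # Same tasks, one worker thread per task.
    start = time.perf_counter()
    with ThreadPoolExecutor(max_workers=tasks) as pool:
        threaded_results = list(pool.map(cpu_task, inputs))
    threaded_seconds = time.perf_counter() - start

    # Both strategies must produce identical answers.
    assert serial_results == threaded_results
    return serial_seconds, threaded_seconds, serial_seconds / threaded_seconds


if __name__ == "__main__":
    serial, threaded, speedup = measure_speedup()
    print(f"serial={serial:.3f}s threaded={threaded:.3f}s speedup={speedup:.2f}x")
```

On a GIL-enabled build the speedup should hover around 1x (or slightly below, due to thread overhead); a value well above 1x is a sign the threads really are running in parallel.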

Section 3: The Rust-ification of Tooling

If you are still using pip to manage your packages in 2026, you are likely in the minority. The Python ecosystem has undergone a massive "Rust-ification," where the most critical developer tools have been rewritten in Rust for near-instant performance.

The Reign of uv and ruff

The tool uv has become the de facto standard for package management. It has replaced pip, pip-tools, and poetry for most professional teams. Because uv is written in Rust and caches aggressively, it resolves dependencies and installs packages in a fraction of the time pip needs; with a warm cache, installs that used to take minutes often finish in about a second.

Similarly, ruff has replaced the entire linting and formatting stack (Flake8, Black, isort). In 2026, we no longer wait for CI/CD pipelines to tell us we missed a trailing comma; the feedback loop is now instantaneous.

| Tool (Old World) | 2026 Standard (Rust-based) | Performance Impact |
|---|---|---|
| pip / poetry | uv | 10x - 100x faster installs |
| flake8 / black | ruff | Near-instant linting/formatting |
| virtualenv | uv venv | Instant environment creation |
| pip list --outdated | uv tree --outdated | Outdated-dependency report built into the resolver (vulnerability scanning with a tool such as pip-audit is still a separate step) |

The Impact on CI/CD

In 2026, the average "build time" for a Python microservice has dropped by nearly 80%. This has fundamentally changed how we work. We no longer hesitate to add a dependency or run a full linting suite on every save because there is no perceived "cost" in time.

Section 4: "Vibe Coding" and the Agentic Shift

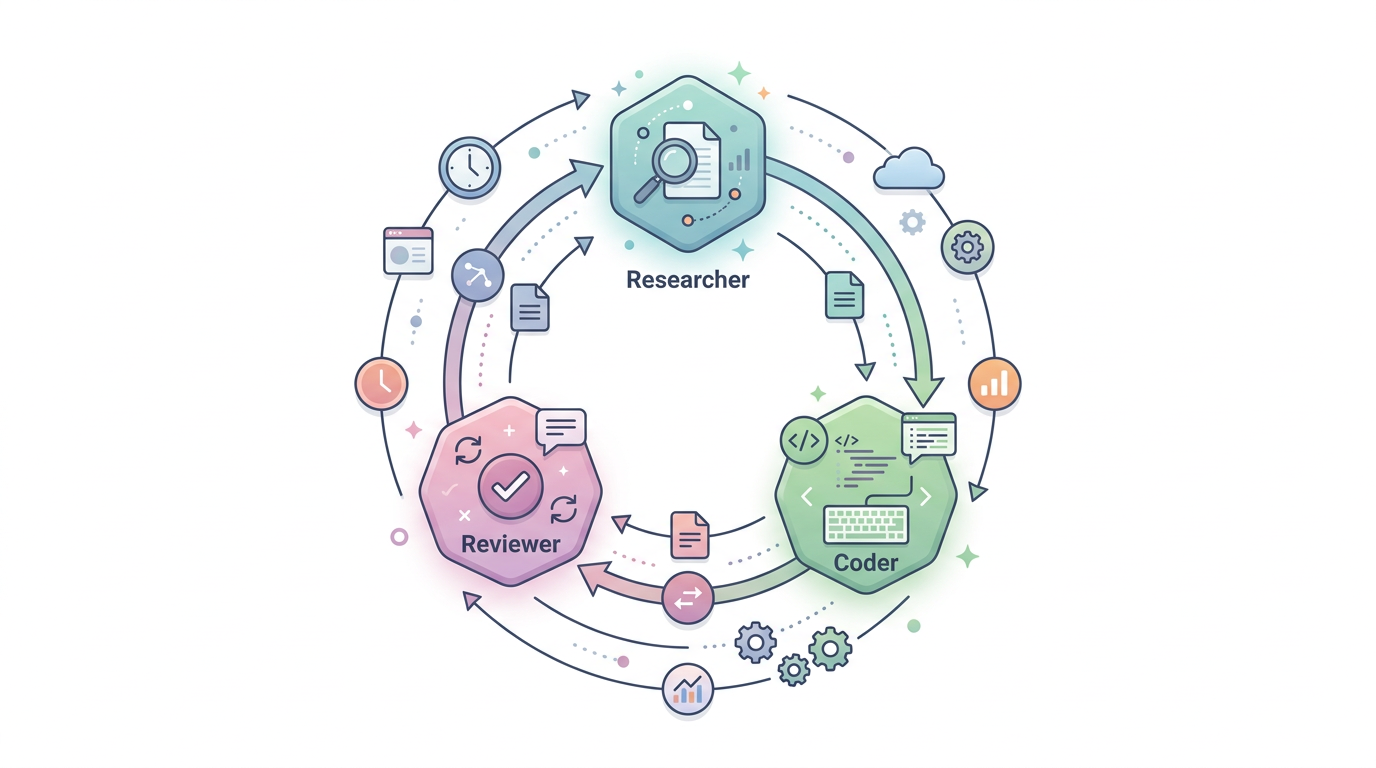

The term "Vibe Coding" was coined by Andrej Karpathy in early 2025 to describe building software by stating intent and letting an LLM write the code; in 2026 it increasingly covers the high-level orchestration of AI agents. In this paradigm, Python is rarely written "line by line" by humans for boilerplate logic. Instead, we use frameworks like LangGraph, CrewAI, and AutoGen to coordinate teams of autonomous agents.

Why Python is the "LLM's Native Tongue"

Python’s syntax is incredibly close to natural language, which makes it the most predictable and accurate language for AI models to generate. When an AI "thinks" in code, it thinks in Python. This has led to a shift where the developer’s role is now more akin to a System Architect or a Prompt Engineer than a traditional "coder."

Code Example: Agentic Orchestration with LangGraph

Here is a simplified look at how we define a multi-agent "vibe" in 2026. This script coordinates a "Researcher" agent and a "Writer" agent to produce a technical report.

```python
from typing import TypedDict

from langgraph.graph import StateGraph, END


class AgentState(TypedDict):
    """The state shared between our agents."""
    task: str
    research_notes: str
    final_report: str
    iteration_count: int


def researcher_node(state: AgentState):
    """Agent that simulates deep research."""
    print("--- RESEARCHER STARTING ---")
    # In a real 2026 workflow, this would call a search API
    state["research_notes"] = "Key findings on Python 3.14 performance..."
    return state


def writer_node(state: AgentState):
    """Agent that drafts the final output."""
    print("--- WRITER STARTING ---")
    state["final_report"] = f"Report based on: {state['research_notes']}"
    return state


# Initialize the Graph
workflow = StateGraph(AgentState)

# Add our Agents as nodes
workflow.add_node("researcher", researcher_node)
workflow.add_node("writer", writer_node)

# Define the flow
workflow.set_entry_point("researcher")
workflow.add_edge("researcher", "writer")
workflow.add_edge("writer", END)

# Compile and Run
app = workflow.compile()
final_state = app.invoke({"task": "Write a report on Python 2026", "iteration_count": 0})
print(f"Final Output: {final_state['final_report']}")
```
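If the graph machinery feels opaque, it may help to see the same idea with no framework at all. What follows is a dependency-free stand-in written for this post, not LangGraph's implementation: `TinyGraph` and its methods are hypothetical and only handle a straight line of nodes, each returning a partial update that is merged into the shared state.

```python
from typing import Callable, Dict, Optional

END = "__end__"  # sentinel marking the end of the flow (hypothetical, mirrors the idea above)


class TinyGraph:
    """Hypothetical minimal state graph: a linear chain of named nodes."""

    def __init__(self) -> None:
        self.nodes: Dict[str, Callable[[dict], dict]] = {}
        self.edges: Dict[str, str] = {}
        self.entry: Optional[str] = None

    def add_node(self, name: str, fn: Callable[[dict], dict]) -> None:
        self.nodes[name] = fn

    def add_edge(self, src: str, dst: str) -> None:
        self.edges[src] = dst

    def set_entry_point(self, name: str) -> None:
        self.entry = name

    def invoke(self, initial_state: dict) -> dict:
        if self.entry is None:
            raise ValueError("no entry point set")
        state = dict(initial_state)  # copy so the caller's dict is untouched
        current = self.entry
        while current != END:
            # Each node returns a partial update that is merged into the state.
            state.update(self.nodes[current](state))
            current = self.edges[current]
        return state


def researcher(state: dict) -> dict:
    return {"research_notes": "notes about Python 3.14"}


def writer(state: dict) -> dict:
    return {"final_report": f"Report based on: {state['research_notes']}"}


graph = TinyGraph()
graph.add_node("researcher", researcher)
graph.add_node("writer", writer)
graph.set_entry_point("researcher")
graph.add_edge("researcher", "writer")
graph.add_edge("writer", END)

if __name__ == "__main__":
    print(graph.invoke({"task": "Write a report"})["final_report"])
```

Real frameworks add branching, checkpoints, retries, and parallel nodes on top of this loop, but the core contract (nodes read shared state and return updates) is the part worth internalizing.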

This shift toward Agentic AI means that Python is no longer just for data science or web backends; it is the operating system for AI-driven automation.

Section 5: The Roadmap — Python 3.15 and the JIT Future

As of March 2026, we are currently looking at Python 3.15 Alpha 6. While 3.14 was about the GIL, 3.15 is about the JIT (Just-In-Time) Compiler.

The Copy-and-Patch JIT

The experimental copy-and-patch JIT compiler introduced in 3.13 has matured significantly. In the current 3.15 alpha builds, we are seeing a 5–10% geometric mean speedup across the board. The JIT compiles the micro-op traces produced by the interpreter's "Tier 2" optimizer, and the goal for the final release in October 2026 is to strengthen that optimizer while making the JIT nearly zero-overhead.
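Whether any of this applies to your interpreter depends on how it was built and launched. As a small, hedged sketch: CPython 3.14 and later expose a `sys._jit` namespace for introspection, and the helper below (`jit_status` is a name invented here) simply reports "not available" when that namespace is missing.

```python
import sys


def jit_status() -> dict:
    """Report JIT availability via sys._jit when the interpreter provides it."""
    # sys._jit exists on CPython 3.14+; it is absent on older versions
    # and may be absent on other Python implementations.
    jit = getattr(sys, "_jit", None)
    if jit is None:
        return {"available": False, "enabled": False}
    return {
        "available": bool(jit.is_available()),  # was the JIT compiled into this build?
        "enabled": bool(jit.is_enabled()),      # is it switched on for this process?
    }


if __name__ == "__main__":
    print(jit_status())
```

On builds that include the JIT but leave it off by default, setting the `PYTHON_JIT=1` environment variable before launching the interpreter is the usual way to turn it on.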

Default UTF-8 (PEP 686)

Another massive quality-of-life change in 3.15 is that UTF-8 is now the default encoding across all platforms, including Windows. This finally puts an end to the "UnicodeDecodeError" nightmares that have plagued Windows-based Python developers for decades.
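Until every machine you deploy to runs 3.15, the defensive habit is still to pass `encoding="utf-8"` explicitly. The sketch below shows that habit plus a quick way to inspect what the current interpreter would pick on its own; `encoding_report` and `roundtrip` are illustrative helper names, not standard functions.

```python
import locale
import sys
import tempfile
from pathlib import Path


def encoding_report() -> dict:
    """Show the settings that decide the default text encoding."""
    return {
        "utf8_mode": bool(sys.flags.utf8_mode),  # True when UTF-8 mode is active
        "preferred_encoding": locale.getpreferredencoding(False),
    }


def roundtrip(text: str) -> str:
    """Write and read text with an explicit encoding, independent of platform defaults."""
    with tempfile.TemporaryDirectory() as tmp:
        path = Path(tmp) / "sample.txt"
        path.write_text(text, encoding="utf-8")
        return path.read_text(encoding="utf-8")


if __name__ == "__main__":
    print(encoding_report())
    print(roundtrip("caf\u00e9"))
```

Code written this way behaves the same before and after the default changes, which makes the upgrade a non-event.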

The "Dead Battery" Cleanup

Python 3.13 officially completed the removal of "dead batteries" (PEP 594): legacy modules like telnetlib and cgi that had been deprecated since 3.11. Python 3.14 inherits the result, a leaner, more secure standard library that is easier to maintain and faster to initialize.
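If you maintain older code, a quick audit can tell you whether it still leans on any of these modules. This is a minimal sketch: the tuple below is only a sample of the modules removed under PEP 594, and `missing_modules` is a helper name made up for illustration.

```python
import importlib.util

# A sample of modules removed under PEP 594 (not the complete list).
SAMPLE_DEAD_BATTERIES = ("cgi", "telnetlib", "nntplib", "pipes", "imghdr")


def missing_modules(names=SAMPLE_DEAD_BATTERIES) -> list:
    """Return the names that cannot be imported on the running interpreter."""
    # find_spec returns None for a top-level module that cannot be found.
    return [name for name in names if importlib.util.find_spec(name) is None]


if __name__ == "__main__":
    print("Not importable here:", missing_modules())
```

Keep in mind that a module showing up as importable does not prove you are on an old interpreter; a third-party backport installed in the environment would have the same effect.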

Practical Application: How to Stay Relevant in 2026

If you want to excel in this new era, you must shed the habits of the 2020s. Here are the practical steps every Python developer should take today:

- Stop using `pip` and `venv` manually. Switch to `uv`. It is safer, faster, and handles Python version management (like `pyenv`) automatically.

- Don't disable the GIL by default. Only use the free-threaded (`--disable-gil`) build for specific microservices that are CPU-bound and need real thread-level parallelism. For standard web APIs (FastAPI, Django), the standard build is still faster due to lower single-thread overhead.

- Master "Agentic" Frameworks. Learn how to use LangGraph or CrewAI. The most valuable Python developers in 2026 aren't the ones who can write the fastest loops, but the ones who can design the most resilient agent architectures.

- Embrace Type Hinting. With AI generating most of our code, Type Hints (via `pydantic` or `typing`) act as the "guardrails" that keep AI-generated logic from breaking.

- Explore Mobile Python. With Android and iOS now Tier 3 supported platforms for CPython, tools like BeeWare have made it viable to share business logic between your backend and your mobile apps.
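To make the "guardrails" point concrete, here is a small standard-library sketch. It assumes a hypothetical `ReportRequest` payload that an agent hands to ordinary Python code; a library such as pydantic would do this validation for you, but a plain dataclass is enough to show the idea.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ReportRequest:
    """Hypothetical payload an agent might pass to hand-written code."""

    task: str
    max_sources: int

    def __post_init__(self) -> None:
        # Dataclasses do not enforce annotations at runtime, so check explicitly.
        if not isinstance(self.task, str) or not self.task.strip():
            raise ValueError("task must be a non-empty string")
        if not isinstance(self.max_sources, int) or self.max_sources < 1:
            raise ValueError("max_sources must be a positive integer")


def parse_request(raw: dict) -> ReportRequest:
    """Turn loosely structured (possibly AI-generated) data into a checked object."""
    return ReportRequest(task=raw.get("task", ""), max_sources=raw.get("max_sources", 0))


if __name__ == "__main__":
    print(parse_request({"task": "Summarize PEP 779", "max_sources": 3}))
```

The annotations give your editor and type checker something to verify statically, while the runtime checks catch the malformed values that only show up when generated code or model output reaches the boundary.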

Common Mistakes to Avoid

- Over-optimizing for speed: With the JIT and No-GIL, developers are tempted to write complex multi-threaded code. Remember: Readability still counts. Only optimize when a profiler tells you to (the statistical sampling profiler from PEP 799, which arrives with Python 3.15, is worth trying).

- Ignoring the "Rust-ification": Some legacy projects still cling to slow, C-based extensions. When possible, look for Rust-based alternatives (like `polars` instead of `pandas` for large datasets) to maximize performance.
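On the "let the profiler decide" point above, you don't need anything new to get started. The sketch below uses `cProfile` and `pstats`, the deterministic profiler that has shipped with Python for years (not the PEP 799 sampling profiler); `slow_sum` and `profile_call` are illustrative names.

```python
import cProfile
import io
import pstats


def slow_sum(n: int) -> int:
    """Deliberately simple workload to profile."""
    return sum(i * i for i in range(n))


def profile_call(func, *args) -> str:
    """Run func under cProfile and return the top entries as text."""
    profiler = cProfile.Profile()
    profiler.runcall(func, *args)

    buffer = io.StringIO()
    stats = pstats.Stats(profiler, stream=buffer)
    # Sort by cumulative time and keep only the five most expensive entries.
    stats.sort_stats("cumulative").print_stats(5)
    return buffer.getvalue()


if __name__ == "__main__":
    print(profile_call(slow_sum, 1_000_000))
```

If the hot spot turns out to be a single function, that is the place to consider threads, a faster library, or a rewrite; everything else can stay readable.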

Final Thoughts

As we move through the first half of 2026, it’s clear that Python has achieved a rare feat in the technology world: it has reinvented itself without losing its soul. It remains the most beginner-friendly language on the planet, yet it now possesses the raw power to compete with systems-level languages in multi-core environments.

The "Python Renaissance" isn't just about technical milestones like the No-GIL build or the JIT compiler. It’s about a change in the developer's identity. We are no longer just "coding"; we are orchestrating. We are using Python to build brains for machines, to automate the mundane, and to push the boundaries of what is possible with parallel computing.

Whether you are "Vibe Coding" your first startup idea or migrating a massive enterprise microservice to Python 3.14.3, the message is clear: Python’s best days aren't in the past—they are happening right now.